What’s Left in the Carbon Budget Before It’s Too Late?

Passing the 400ppm milestone, we head for 2°C warmer

Jun 6 2013

It is only six pages long (four if one counts only the main text and not the notes and references), yet it has evidently become the most influential paper in the environmental science world. What gives it such gravitas, of course, is not just the intensive computer modeling that led to its conclusions, but its way of quantifying the threat of global warming.

Published four years ago in the April 2009 issue of Nature, the preeminent science weekly, the paper is dense with the terminology of climate science and therefore largely unknown outside scientific circles. But, analogous to Google rankings, the number of references to the paper in other scientific papers and articles puts it in the top 0.1% of environmental articles and just shy of the top 0.001%. If citations in books, professional societies and university websites, etc. are included, as Google Scholar does, the number of referrals more than doubles.

Titled “Greenhouse-gas emission targets for limiting global warming to 2°C”, or Celsius, which is 3.6° Fahrenheit, it is the result of research by eight scientists from Germany, the UK and Switzerland. At the time, more than 100 countries had adopted a 2°C rise in warming above pre-industrial levels as a guiding limit beyond which they agreed that the planet would suffer irreversible damage. That number has since risen to 167 nations signing on to the Copenhagen Accord. But the amount of emissions that would equate to that 2°C rise (and beyond) was poorly understood, said the group, and that is what they set out to solve when they began work in 2006.

What they found is grim. Using the novel metaphor of a budget (a carbon budget spanning the years 2000 to 2050), they said that for humans to have only a 50% chance of holding to the 2°C limit, we will need to restrict carbon dioxide emissions to 1,440 billion tons (gigatons) across that entire timespan. (That probability enters the mix owing to the many other variables affecting climate.)

To improve those odds, the budget would of course need to be cut back further. The paper further foresees that we would need to restrict emissions to 1,000 gigatons if we choose to aim for only a 25% likelihood of going above 2°C, or to 890 gigatons to reduce the chance of exceeding 2°C to 20%.

Trouble is, around 234 gigatons of CO2 had already been emitted in just the period between 2000 and 2006, says the paper. The authors say that, continuing at the average annual emission rate of those years (36.3 gigatons), we will run through the carbon budgets that hold us to a 20%, a 25% or at worst a 50% chance of exceeding a 2°C temperature increase by 2024, 2027 or 2039, respectively. So in a mere eleven years, we will have spent the budget that keeps the chance of crossing the 2°C divide down to 20%.
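The arithmetic behind those dates can be sketched in a few lines of Python. This is our own back-of-the-envelope reconstruction, not the authors' method: it assumes the clock starts at the end of 2006, that emissions hold at a flat 36.3 gigatons a year, and that each budget is simply divided by that rate. The function and constant names are ours.

```python
import math

# Figures quoted in the article (gigatons of CO2).
EMITTED_2000_2006_GT = 234.0   # already emitted between 2000 and 2006
ANNUAL_RATE_GT = 36.3          # average annual emissions over those years
BASE_YEAR = 2006               # assumption: counting starts at the end of 2006

# Budget for 2000-2050 mapped to the chance of exceeding 2 degrees C.
BUDGETS_GT = {890.0: 0.20, 1000.0: 0.25, 1440.0: 0.50}


def exhaustion_year(budget_gt, emitted_gt=EMITTED_2000_2006_GT,
                    rate_gt=ANNUAL_RATE_GT, base_year=BASE_YEAR):
    """Calendar year in which a budget runs out at a constant emission rate."""
    remaining_gt = budget_gt - emitted_gt
    years_left = remaining_gt / rate_gt
    return math.floor(base_year + years_left)


for budget_gt, chance in sorted(BUDGETS_GT.items()):
    year = exhaustion_year(budget_gt)
    print(f"{budget_gt:,.0f} Gt budget ({chance:.0%} chance of exceeding 2C): "
          f"spent around {year}")
```

Under those assumptions the three budgets run out around 2024, 2027 and 2039, matching the years given above. A rising emission rate would pull every one of those dates earlier.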

Actually the prognosis is worse still. Rather than holding at a steady 36.3 gigatons each year, annual emissions have climbed beyond the figure the scientists used for projecting, driven by the industrial expansion of China and, to a lesser extent, India. Those increases drain the budgets sooner.

Thus we are confronted with the consequence of ignoring the global warming alarms raised for over three decades. This article reports that scientists at NASA’s Goddard Space Flight Center warned in the journal Science of global warming of “almost unprecedented magnitude” in the 21st century that could “flood 25 percent of Louisiana and Florida, 10 percent of New Jersey and many other lowlands throughout the world”. The article dates from August 1981.

We have since seen an enormous outpouring of such articles, talks, documentaries and demonstrations foretelling this calamity, yet the world has done nothing on the scale needed to head it off or, as with the United States, has largely looked the other way.

That article was written when the concentration of CO2 in the atmosphere was 335 to 340 parts per million (ppm), which itself had risen well above the pre-industrial level of 280 ppm a century earlier. And in the middle of this May, the measurement at the station atop Mauna Loa in Hawaii, which has been monitoring the Pacific air for half a century, crossed the 400 ppm threshold.

consensus

Meanwhile comes a just-published study that is the most comprehensive survey of climate research to date. It analyzed all the scientific papers on climate published between 1991 and 2011. Of those that expressed an opinion, 97.1% attributed rising temperatures to human causes. But skeptics say there has been no increase in temperature (which we reported here), pointing to the last decade, in which average global temperatures plateaued, and attributing the rising temperatures in the years before that to natural causes.

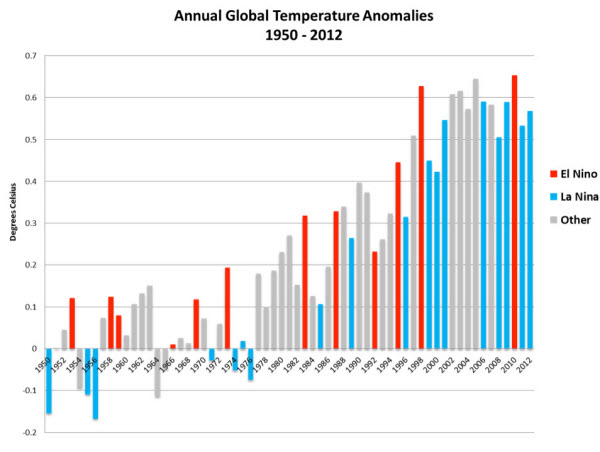

By "anomalies" is meant each year's departure, up or down, from a long-term average temperature.

They further dispute the accuracy of computer modeling, although its abandonment would leave no way to consider the future other than waiting for it to happen.

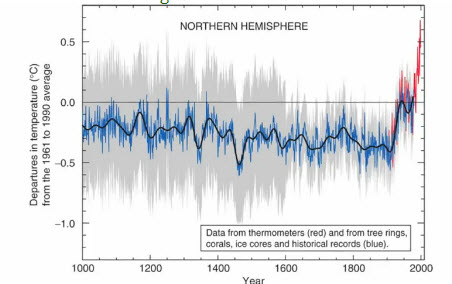

Temperature measurements across the last 1,000 years, with the steep spike at the end.

But a factor that has certainly persuaded the scientific community of the validity of the European study is the huge number of constraints, variables and iterations that it represents. To give a little of the flavor of that work, here is an excerpt from a section titled “Methods”:

“Out of more than 400 parameters, we vary 9 climate response parameters, (one of which is climate sensitivity), 33 gas-cycle and global radiative forcing parameters (not including 18 carbon-cycle parameters…) and 40 scaling factors… To constrain the parameters we use observational data of surface air temperatures…from 1850 to 2006, the linear trend in ocean heat content changes from 1961 to 2003 and year 2005 forcing estimates for 18 forcing agents…With a Metropolis-Hastings Markov chain Monte Carlo approach, based on a large ensemble (>3 x 10^6) of parameter sets using 45 parallel Markov chains with 75,000 runs each...”

And so on. You don’t need to know what all this means (nor do we know) to realize that the study was enormous.
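For the curious, the Metropolis-Hastings idea named in that excerpt can be shown on a toy problem. The sketch below is ours and has nothing to do with the paper's climate model: it assumes a made-up one-parameter target (a bell curve centered on 2.0) and runs just 4 chains of 5,000 steps, against the study's 45 chains of 75,000 runs over dozens of parameters. Each chain proposes a small random move and accepts it with a probability that depends on how much more plausible the new value is than the old one.

```python
import math
import random


def log_target(x):
    """Toy log-density (unnormalized): a normal curve with mean 2.0, sd 1.0."""
    return -0.5 * (x - 2.0) ** 2


def run_chain(n_steps, start, step_size, rng):
    """One random-walk Metropolis-Hastings chain over a single parameter."""
    x = start
    samples = []
    for _ in range(n_steps):
        proposal = x + rng.gauss(0.0, step_size)
        log_accept = log_target(proposal) - log_target(x)
        # Always accept uphill moves; accept downhill moves with
        # probability exp(log_accept).
        if log_accept >= 0 or rng.random() < math.exp(log_accept):
            x = proposal
        samples.append(x)
    return samples


def run_chains(n_chains=4, n_steps=5000, burn_in=1000, seed=0):
    """Run several chains from scattered starts and pool the later samples."""
    rng = random.Random(seed)
    pooled = []
    for _ in range(n_chains):
        start = rng.uniform(-10.0, 10.0)
        chain = run_chain(n_steps, start, step_size=1.0, rng=rng)
        pooled.extend(chain[burn_in:])  # drop the early, start-dependent steps
    return pooled


samples = run_chains()
mean = sum(samples) / len(samples)
print(f"{len(samples)} pooled samples, estimated mean {mean:.2f}")
```

The pooled samples cluster around the target's center even though each chain starts somewhere arbitrary. The study applies the same principle at vastly greater scale, accepting or rejecting whole sets of parameters according to how well they reproduce the observed record.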

Bill McKibben, co-founder of 350.org and today’s leading environmental activist, has evidently been influenced by this study. In a highly recommended article last August in Rolling Stone he referred to “one of the most sophisticated computer-simulation models that have been built by climate scientists around the world over the past few decades”, and the fact that he picks up the same approach of a “carbon budget” suggests he is referring to the 2009 European study. McKibben is currently leading the charge against authorization of the Keystone XL pipeline, against which his organization plans demonstrations across the country this summer.

leave it in the ground

The authors gathered estimates from the literature on the subject of all proven fossil fuel reserves that could be extracted “given today’s techniques and prices”. In their estimate, if that entire world inventory were then burned, it would equate to 2,800 gigatons of CO2. This is the same as the amount estimated by a UK-based outfit named Carbon Tracker Initiative, which says that figure doesn’t even include unconventional sources like tar sands, oil shale and methane hydrates.

Either way, 2,800 is well above the budget figures cited earlier. If all those reserves were extracted and burned, that would be nearly double the worst case of 1,440 gigatons that leads to only a 50-50 chance of staying at no more than a 2°C temperature rise. Which raises an interesting prospect. If and as the global temperature verges toward that benchmark over the coming decade, what if some of those 167 countries begin to panic, fearing rising seas, violent storms, agricultural drought and an inability to feed their people? What if they were to begin to impose severe emission constraints that leave those hydrocarbons in the ground? McKibben cites a former managing director at JP Morgan who calculated that at today's market value those reserves are worth about $27 trillion. What will then become of the market value of some of the world’s biggest companies (and the wealth of countries that are little more than fossil fuel companies) that are banking on a business-as-usual future in which the world goes on forever buying and burning those reserves?

Carbon Tracker’s mission is to quantify the “carbon embedded in equity markets” and the risks to the fossil fuel companies in what could become “unburnable carbon” — that is, reserves that make up much of the valuation of those companies but would become worthless if left in the ground. McKibben’s 350.org has a similar mission to begin that devaluation by getting universities, religious institutions and state and city governments to divest their holdings of fossil fuel companies with the slogan “It’s wrong to profit from wrecking the climate”.

In recognition that so much of what is below ground will have to stay there, shouldn’t the public companies begin to work down their market capitalization by returning value to their shareholders? Instead, they continue to do the opposite. In 2012 they paid out $126 billion to shareholders while, says Carbon Tracker, they go on spending on exploration and development of reserves year after year ($674 billion last year alone) to find what could become stranded assets. The Economist wonders whether companies have decided that governments will be unable to impose limits on publics unwilling to give up any amenities, and that we will go on to burn all their reserves after all, cooking the Earth and leaving that wishful 2°C increase far behind. Or perhaps they intend to accomplish the same objective by promoting geo-engineering, the idea that we could cool the planet by darkening the skies to block out the Sun.


Yes, the chart with its steep uptick is truly frightening. Fortunately it’s also complete bunkum. It is none other than Michael Mann’s infamous, and now totally discredited, “hockey stick” graph, once the poster-child of the IPCC, now quietly discarded by them.

It’s the graph that managed to “disappear” both the MWP and the LIA; it’s the chart that was arrived at by grafting real temperature readings onto cherry-picked proxy data without actually mentioning the fact, and it’s the output of a computer program that will produce an “uptick” effect even if you feed random numbers into it.

It is not possible for humans to significantly increase the amount of CO2 in the atmosphere, regardless of what, or how much, we burn. The quantity of CO2 in the atmosphere is directly proportional to the pressure differential between the atmosphere and the oceans, and inversely proportional to the temperature of the oceans.

Henry’s Law – one of the Noble Gas Laws.

Put simply, anything that happens (including human activity) that leads to an increase in the amount of CO2 (or any other gas) in the atmosphere, all other things being equal, will simply lead to more CO2 being dissolved in the oceans, provided the temperature of the ocean remains constant.

Conversely, the warmer the oceans get, the less CO2 (or any other gas) can be dissolved there, regardless of the pressure differential.

These two forces work perpetually in conflict with each other, perpetually striving for an unobtainable equilibrium.

Ocean temperatures have generally been rising since the end of the LIA circa 1850. Consequently, the oceans have been outgassing CO2 leading to increased atmospheric concentrations. It has absolutely nothing to do with humans.

With the recent passing of the solar maximum we are now in a 150-year global cooling phase, and the oceans are now losing heat. Within five years atmospheric concentration of CO2 will begin to fall, as the cooling oceans, instead of outgassing CO2, will dissolve more of it, regardless of how much puny humans produce. All our minuscule production will do is ever so slightly slow down the rate of drop in atmospheric concentration.

There is a downside to all this. Increased atmospheric CO2 concentration has led to a 22% increase in yield from field crops such as wheat, rice, corn etc. The next 20 years will see that increase lost, and 1.5 billion people will die from starvation.

Coupled with the couple of billion who will die of starvation as a result of reduced growing seasons, things look bleak.

History will not look kindly on our current generation of “Greenies”, who not only allowed it to happen, but campaigned for it to be so.

One correction: The validity of the Michael Mann graph called “bunkum” and “now totally discredited” above was in fact affirmed in 2006 by a special committee of the National Academy of Sciences (U.S.) formed to study the controversy. That was reported in Nature here, but Nature has a pay-wall. As an alternative, this article has a summation for those interested. If the commenter has a more recent report from an equally valid source that reverses the NAS findings, we will retract this correction.

“… a study just published … analyzed all the scientific papers on climate published between 1991 and 2011. Of those that expressed an opinion, 97.1% attributed rising temperatures to human causes.”

Presumably you refer to the Lewandowsky paper. This study was based on cherry-picked respondents who are paid researchers in the area of climate change. Known skeptics were invited to participate, but the majority declined because of previous experiences.

The percentage figure you quote means 97.1% of those working in the field who also chose to respond to the survey.

It does not include those directly working in the field (but not invited to respond), nor does it include those working in the field who declined to respond, nor does it include skeptics and those working in other fields, who declined to respond.

I am amazed that the survey results show that 2.9% of the carefully selected respondents managed to get the answer wrong.

Thank you for introducing us to the Lewandowsky Papers, which report on an opinion survey of a year ago by a psychologist about attitudes toward climate science and other matters. Rather, we refer to a study which, as our text says, was just released and which analyzed the text of published scientific papers on climate across two decades. We should have provided a link. A report of the study can be found here.

We humans not only survived the Middle Ages warm period and the Roman-era warm period but prospered during those times, and no, 25% of Fla., La. and NJ were not under water. However, Greenland was green. No ice cover; people lived there. The remnants of these communities are still under the ice. This is verified history, not a computer-program-generated hypothesis. The scientists do not know what will happen, but they know how to milk the governments of the world for money and influence. They are not supported by history.

Even if warming had nothing to do with mankind, all those scientists would still be doing the same work to measure and forecast what is happening, because it is a huge problem humanity faces. So claiming that they attribute global warming to humanity in order to get money is a red herring. You are just repeating denier propaganda.

Climate change has been going on for about 4 billion years. The changes were and continue to be made naturally. I propose that the IPCC-endorsed media blitz be re-channeled to urge the 7 billion of us to ‘adjust’ accordingly. A five-to-one calorie ratio of fossil fuel input to an alternative energy device’s output is required. All the media paranoia now blitzing Homo sapiens about climate change is counterproductive, except that it keeps a large number of climate scientists on ‘white-collar welfare.’

That Earth’s climate has been changing throughout its 4 billion years: how irrelevant! The issue is the climate *now*, and the climate humans need to survive. The chart in the article of the temperature across the last thousand years shows clearly the steep rise in temperature that has exactly paralleled the industrial age and the burning of fossil fuels. That the energy content of those fuels far exceeds current alternatives is undeniable and perhaps adapting is all that is left to us, but what is it that causes deniers to refuse the scientific evidence that human activity is the most likely cause of warming? Do they think that decades of burning buried hydrocarbons into the atmosphere have no effect?